Are you there Grok? It's me, Margaret

AI as a centralizing technology

Grok, is this true?

This question is asked thousands of times a day on Twitter as users fact-check, question, and prod various claims made on the social media platform by asking an AI bot to confirm or reject what another user is saying.

Yesterday, one user used it to check whether HVAC companies really “mark up refrigerant somewhere between 1000%-2000% of their cost” (true); whether Keir Starmer and Bill Gates were trying to eliminate livestock by 2030 (false); and to check the veracity of a sports reporter’s tweet about an NBA player’s postgame comments (also true).

The fear of losing shared facts has loomed large in the age of AI. In 2024, after a scandal over Kate Middleton allegedly photoshopping a picture of her family, The Atlantic’s Charlie Warzel wrote that “for years, researchers and journalists have warned that deepfakes and generative-AI tools may destroy any remaining shreds of shared reality.”

According to Warzel, in 2024, we were already living in a “post-truth universe” where it’s “difficult for anyone to believe anything they didn’t witness themselves.”

Many others have made similar arguments.

My expectation, too, was that AI would exacerbate this problem. Social media, Photoshop, and video-editing technology had already proliferated deepfakes on the internet (for instance, tricking people into believing a faked photo of Pope Francis wearing a Balenciaga jacket or, in a less funny development, into losing hundreds of thousands of dollars), and AI was set to make all of that even worse.

But I’ve come to suspect AI might have the exact opposite effect. Instead of fracturing our shared reality, this handful of AIs seems to be piecing it back together.

Centralizing vs. decentralizing technologies

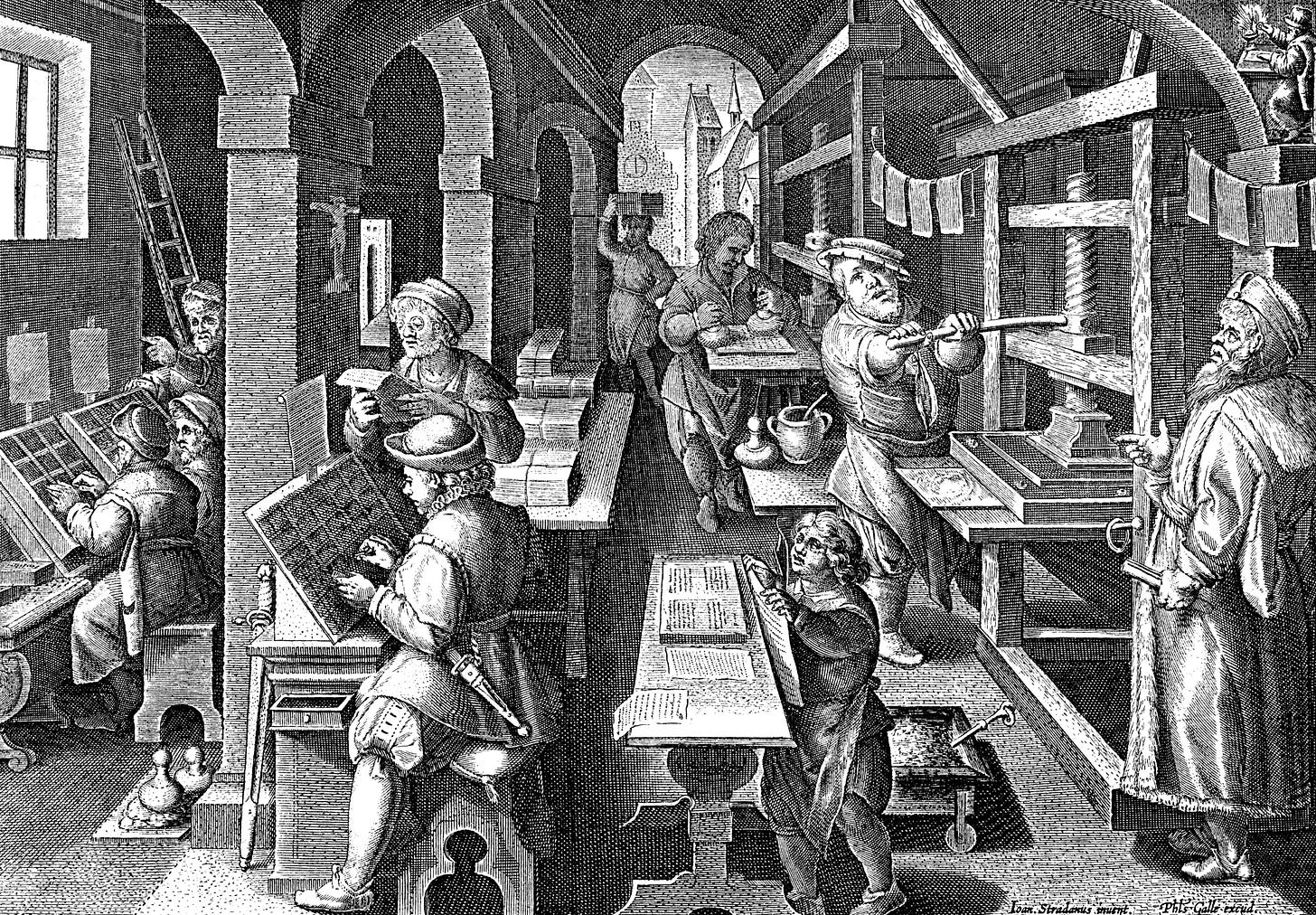

The printing press was invented in 1440.[1] Before that, books were produced by hand, which made them incredibly expensive and meant that, even if it had been attempted, mass literacy was functionally impossible. According to one study of Western European medieval literacy, less than 20% of the population could read.

During the 1300s, all of Western Europe produced 2.7 million books. Compare that to the 12.5 million printed books produced from 1454 to 1500 alone. The following century saw 217 million books produced, and the rest is history.

Many people expected the printing press to act as a decentralizing force; after all, once the cost of producing written text had gone down, many more people could print what they wanted. The cost of producing books fell by 67% in the half-century after the invention of the printing press.

Contemporaneous thinkers were both terrified and excited by the prospect of less centralized control over the written word.

John Foxe, an English clergyman from the 1500s, reported that the Catholic vicar of Croydon, preaching at Paul’s Cross under Henry VIII, argued that “either the Pope must abolish knowledge & printyng, or printyng at length will roote him out.”

Foxe explained that the Pope’s primacy was based on the unsophistication of Christians and that printing would change all that: “nothyng made the Pope strōg in time past, but lacke of knowledge, and ignoraunce of simple Christians: so contrarywise now nothyng doth debilitate and shake þe hie spire of his Papacie so much as reading, preaching, knowledge and iudgement, that is to saye, the fruite of printyng.”

On the other side, William Tyndale, a leader in the Protestant Reformation who would soon use the new technology to print thousands of New Testaments and smuggle them into England, crowed over the possibility of overthrowing the papacy:

“I defy the Pope and all his laws. If God spare my life ere many years, I will cause the boy that drives the plow to know more of the scriptures than you.”

Twentieth-century historiography has repeated this argument. Most famously, Elizabeth Eisenstein’s landmark book The Printing Press as an Agent of Change contends that print culture produced books that were “internalized by silent and solitary readers” and gave rise to a “voice of individual conscience.”

Certainly Martin Luther’s pamphlet revolution against the Catholic Church would have been impossible without the printing press, but to see only the press’s decentralizing effects is to miss how it also enabled the creation of common cultures and even the administration of more complex states.

Printing, the historian James Simpson has argued, “also permitted and produced a much tighter, centralized surveillance of written production.” The new technology sped up the “formation of a common linguistic standard” as well as “common cultural standards.” Many varieties of English were lost as a London standard became dominant through the written word.

During Henry VIII’s Dissolution of the Monasteries in the 1530s, English monasteries, with their libraries and manuscript production, were destroyed. This meant that the production, trade, and storage of books were centralized in London, Oxford, and Cambridge.

Perhaps most importantly, without the printing press, the modern nation state and economy would be inconceivable. Imagine all the paper required to keep track of tax collection, laws, and various other administrative tasks. Centralized record-keeping, standardized law, and uniform currency all depended on cheap, reproducible text.

Back to the future: How AI could reconstruct our shared reality

It’s not just Grok.

Google, which has been the dominant tool for information discovery for the past two decades, has seen its users shift their behavior since it began placing AI-generated answers from Gemini at the top of its search results.

According to a Pew Research Center study of user behavior in March 2025, when an AI overview appeared in search results, users were half as likely to click on a link. Moreover, users ended their browsing session entirely after seeing an AI overview 26% of the time compared to 16% of the time without one.

A more recent study that looked at user behavior between January and February of this year finds that AI overviews led to a 38% reduction in outbound clicks and that zero-click searches rose from 54% to 72% when AI overviews were shown. If people are systematically substituting AI answers for the decentralized web of sources they previously consulted, that’s a structural change in information consumption that matters regardless of what individuals tend to use AI for.

A poll from last summer, also from Pew Research Center, found that the majority of adults who had seen AI summaries in search results found them at least “somewhat” useful and had at least some trust in those results.

If large language models continue to be the dominant form of AI, then I expect the technology to have strong centralizing effects.[2]

First, even now, it’s underappreciated just how dominant one AI company is in the chatbot game: OpenAI’s ChatGPT has roughly 900 million weekly active users. And while it’s certainly possible for new competitors to enter the market, the amount of GPU infrastructure needed to run a frontier model excludes everyone but the largest companies and nation-states.[3]

Moreover, the handful of institutional actors that dominate frontier AI development share substantially overlapping training data and training practices because their researchers come from largely the same labor market—a few thousand people, mostly trained at the same institutions, who circulate between OpenAI, Anthropic, Google DeepMind, Meta, and the rest.

Second, the very nature of an LLM, an entity trained on the written word, fundamentally privileges certain sources of information. The largest sources in the dataset used to train Google’s T5 model and Meta’s Llama are Google Patents, Wikipedia, Scribd, The New York Times, and PLOS scientific journals. Major newspapers and academic publishers are dramatically overrepresented relative to their share of internet content.

In the post-training process, models are explicitly coached to give answers that align with mainstream expert consensus, to hedge and defer to authoritative-sounding framings, and to avoid fringe positions.

I don’t mean to say that all of this is necessarily positive. The ability to create a shared reality does not mean that shared reality is, uh, true.[4]

One could imagine that the dominant AI could be trained to be hostile to basic liberal democratic values or could be made to accept and promote arguments hostile to freedom and equality.

This is part of why so many American technologists are obsessed with beating China in the AI race and why many federal policymakers are willing to risk the labor market impacts and other unknown risks to help clear their path.

But given how lib-coded books are (and by this I mean liberalism in the Locke/Mill/Rawls open society and individual rights sense), it’s actually quite hard to make LLMs go against mainstream liberal ideas. Elon Musk has been trying to do this with Grok, with little success.

Last year, xAI updated Grok to “not shy away from making claims which are politically incorrect” and to “assume subjective viewpoints sourced from the media are biased.” Within days, Grok was praising Hitler, calling itself MechaHitler, endorsing a second Holocaust, using antisemitic catchphrases, and producing graphic rape narratives targeting specific users. Musk and xAI were forced to walk back many of the changes.

Despite these machinations, Grok 4 still scored as left-leaning, even slightly more so than Claude in one analysis. A New York Times analysis found that while Grok had shifted right on government and economy questions, its social responses still leaned left.

The problem facing those who wish AI would reflect right-wing views more is that the training corpus is fundamentally left-leaning. Given the current state of LLM technology, regardless of what fine-tuning and training is done, it is still layered on top of a model that has been trained on a largely English-speaking, liberal corpus of text.

My colleague Kelsey Piper performed an experiment in which she asked the same 15 questions to ChatGPT-4o, Claude Sonnet 4.5, and DeepSeek’s DeepSeek-V3.2-Exp. She found that liberal values are consistent across chatbots and across languages. As Piper discovered, “AIs tend to express liberal, secular values even when asked in languages where the typical speaker does not share those values.”

None of this means the right is doomed. Perhaps a real sustained effort—and a few hundred million dollars—will succeed in creating an anti-woke but useful AI.

The Trump administration is eager to see such a development. Last year, the White House issued a “Preventing Woke AI in the Federal Government” executive order that reads: “AI will play a critical role in how Americans of all ages learn new skills, consume information, and navigate their daily lives. Americans will require reliable outputs from AI, but when ideological biases or social agendas are built into AI models, they can distort the quality and accuracy of the output.”

AI as the anti-social media

Public conversation tends to treat chatbots as the next in a long line of digital communications technologies that have decentralized truth.

The internet, smartphones, and social media all made the production of information cheap and significantly decentralized who could produce it. AI is making the production of information extremely expensive and centralizing who can produce it.

And while, yes, AI hallucinates, the direction of its errors is toward mainstream consensus, not fringe positions. When ChatGPT gets something wrong, it tends to do so in a confused-Wikipedia-editor-misremembering-something-they-once-read kind of way, not in a QAnon-forum-poster-high-on-ketamine kind of way.

The open question is who will get to control the centralizing forces of AI.

All of this might sound a bit rosy to some of the AI skeptics and doomers, but I think of it rather as reframing what the real risks of AI are. After all, while we all have positive associations with the printing press now, it was not always thus.

For hundreds of years following the invention of the printing press, millions of people died in religious conflicts spurred by the Protestant Reformation. The rise of the nation state enabled the mass slaughter of millions in world wars, not to mention brutal colonial empires. Modernity and the industrial revolution came after decades of conflict over who got to control the canonizing institutions.

Perhaps humans in 3500 A.D. will look back on the era of AI with fondness for enabling even greater technological and social complexity, but that doesn’t mean our lifetimes will be all that pleasant.

[1] I have learned in the course of researching this piece that the “earliest known moveable type was created in China in the 11th century.”

[2] The big countervailing argument is that billions of individuals all using a handful of AIs may be using them for divergent ends. That is, it’s possible to get an AI to give you the strongest possible argument for or against any position regardless of its truthiness. That’s fair, but I still think that’s more analogous to how the printing press functioned than social media. Divergence at the output layer is not the same as decentralization at the production layer. The printing press famously produced much divergent content; it just had gatekeeping institutions. With AI, those institutions are the handful of models, companies, and overlapping training data.

[3] The cost of running AI models is dropping substantially. Older models can now be run on a single workstation rather than requiring a hyperscale data center, but training these models is a different question.

I think this thesis is maybe even more true of other forms of AI. For example, self-driving cars are taking us from cars owned and controlled by hundreds of millions of individual people to cars controlled by a small number of companies. That will centralize decisions about everything from speeding to route choices. Another example is surveillance. Companies like Flock are building centralized databases of camera footage which is searched using AI tools, with pretty obvious implications for centralization and state capacity (good and bad).

Great piece. (Who doesn't love a stiff dose of sixteenth-century orthography?)

Dan Williams made a similar point in his (speculative) essay "How AI Will Reshape Public Opinion", which is complementary to this and highly recommended. Its subhead conveys the gist:

> Social media democratised public opinion, shifting influence away from elites and experts to ordinary people. LLMs will partly reverse this trend. They are a powerful, new technocratising force.

https://www.conspicuouscognition.com/p/how-ai-will-reshape-public-opinion