No, Waymos aren't racist

Let's get fake studies out of the conversation about self-driving cars


Are autonomous vehicles (self-driving cars) “less able to detect people of color”? That’s what I read in The Atlantic this weekend, in Xochitl Gonzalez’s “People Who Don’t Like People Are Making All of Our Decisions.”

It appears to be entirely false.

This took some digging, so please join me on a frustrating detective hunt back through Gonzalez’s chain of citations. She cited the “Union of Concerned Scientists,” which indeed said that “studies have shown that automated vehicles are less able to detect people of color and children,” but which does not link any such studies. Its report doesn’t have a byline, and while I submitted a request for more information to their contact form Monday, I haven’t heard back.

So I searched for research on this topic and found this 2023 article from King’s College London about a paper with exactly this finding: “Driverless cars worse at detecting children and darker-skinned pedestrians say scientists.” The preprint of that paper (released around the time of that article) is titled “Dark-Skin Individuals Are at More Risk on the Street: Unmasking Fairness Issues of Autonomous Driving Systems,” and claims to find a “miss rate difference of 7.52% between the dark-skin and light-skin groups.” That is, other things equal, the systems they tested (which are not even the systems in a Waymo, but we’ll get to that) are 7.52 percentage points more likely to miss a dark-skinned pedestrian than a light-skinned one.[1]

The paper was later published in ACM Transactions on Software Engineering and Methodology, but the published version of the paper had a very different finding: nearly identical results on dark-skinned and light-skinned pedestrians. Average miss rate was 30.15% for light skin vs. 29.71% for dark skin, a 0.44-point difference — statistically insignificant.
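The difference between a percentage-point gap and a percent change matters for reading these numbers, and it can be sketched in a few lines of Python. The helper name `miss_rate_gap` is made up for illustration; the inputs are the averages quoted in this article (31.05% and 38.58% from the preprint, 30.15% and 29.71% from the published version). The rounded preprint averages subtract to roughly 7.5 points, which works out to about a 24% relative increase rather than a 7.52% one.

```python
def miss_rate_gap(light_miss_pct: float, dark_miss_pct: float) -> tuple[float, float]:
    """Return (percentage-point gap, relative percent change) between two miss rates.

    Both inputs are miss rates expressed in percent. The relative change is
    measured against the light-skin miss rate.
    """
    point_gap = dark_miss_pct - light_miss_pct
    relative_change = 100.0 * point_gap / light_miss_pct
    return point_gap, relative_change


# Preprint averages, as quoted in the article's footnote.
preprint_points, preprint_relative = miss_rate_gap(31.05, 38.58)

# Published averages, as quoted in the article.
published_points, published_relative = miss_rate_gap(30.15, 29.71)

print(f"preprint:  {preprint_points:+.2f} points, {preprint_relative:+.1f}% relative")
print(f"published: {published_points:+.2f} points, {published_relative:+.1f}% relative")
```

The sign convention here is dark minus light, so a negative value for the published version means the average miss rate was slightly lower for dark-skinned pedestrians.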

It’s a bit unusual for a headline finding to change between the preprint of a paper and final publication, but it’s not unheard of. It looks like the authors adopted a better, more advanced image configuration setup, which made the problem go away. Deprived of its headline finding, the published version instead reported two other disparities. First, image models have a harder time detecting female pedestrians at night. I kind of suspect this is statistical noise, since the authors ran a lot of tests and didn’t correct for multiple comparisons. Second, the models have a harder time detecting children. That looks like a more robust result, and it makes sense: children are physically smaller than adults and harder to see.
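To see why uncorrected multiple comparisons invite noise, here is a minimal sketch of the family-wise error rate and the Bonferroni correction, one standard remedy. It assumes the tests are independent, uses the conventional 0.05 threshold, and uses test counts picked purely for illustration; none of these values come from the paper, and the function names are hypothetical.

```python
def family_wise_error_rate(alpha: float, num_tests: int) -> float:
    """Chance of at least one false positive across independent tests run at level alpha."""
    return 1.0 - (1.0 - alpha) ** num_tests


def bonferroni_threshold(alpha: float, num_tests: int) -> float:
    """Per-test threshold that keeps the family-wise error rate at or below alpha."""
    return alpha / num_tests


ALPHA = 0.05  # conventional significance level; an assumption, not taken from the paper

for num_tests in (1, 5, 20):  # hypothetical test counts, for illustration only
    fwer = family_wise_error_rate(ALPHA, num_tests)
    threshold = bonferroni_threshold(ALPHA, num_tests)
    print(f"{num_tests:>2} tests: {fwer:.0%} chance of a spurious hit, "
          f"Bonferroni per-test threshold {threshold:.4f}")
```

Under these assumptions, the chance of at least one spurious "significant" result grows quickly with the number of subgroup tests, which is why a single surprising subgroup finding deserves some skepticism.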

Is that the only study with this finding? I found one other, “Predictive Inequity in Object Detection,” which is from 2019; image recognition technology has massively advanced since then. Timothy Lee, who also attempted to find the cited “studies” after seeing The Atlantic article, found the same two plus this one, which also found higher miss rates for children but no sex differences (it did not look at race differences).

So, studies do not appear to show that modern vision algorithms are worse at detecting people of color. One study showed that very weak early vision algorithms were worse at that, and then they got better and now show no racial disparities. I am grateful for the hard work that presumably went into making that happen.

All of this is entirely irrelevant to the safety of Waymo. Waymo does not primarily do pedestrian detection through normal cameras and machine learning algorithms that interpret what the cameras are seeing. It has cameras, but it also builds a complete picture of its surroundings with lidar (bouncing a laser around) and imaging radar (from emitting radio waves). Both of those will obviously be race-agnostic, though children will still be harder to see than adults as they take up less space.[2]

It’s worth pausing here to note that “children are harder to see than adults” is also the case for human drivers. If we’re trying to determine whether self-driving cars are safer for children, the right comparison is to human drivers: Which is more likely to hit a child, a human driver or the Waymo that replaces that human driver?

For that, we have a large and growing supply of safety data from the 240 to 250 million miles that I estimate Waymos have driven.[3] Over that distance, according to the statistics reported by Waymo, its cars have been involved in 92% fewer crashes that resulted in a serious injury or worse, 92% fewer injury-causing pedestrian crashes, 85% fewer cyclist crashes, and 83% fewer airbag deployments, compared with human drivers.[4]
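A figure like "92% fewer" is a comparison of crash rates per mile against a human benchmark, so the result depends on which benchmark is used. The sketch below shows only the mechanics. Every rate in it is a made-up placeholder, not Waymo's data or anyone else's, and `percent_reduction` is a hypothetical helper name.

```python
def percent_reduction(av_rate: float, benchmark_rate: float) -> float:
    """Percent fewer crashes per mile for the AV relative to a human benchmark rate."""
    return 100.0 * (1.0 - av_rate / benchmark_rate)


# All rates below are invented placeholders (crashes per million miles),
# chosen only to show the arithmetic. They are NOT real safety data.
AV_RATE = 0.08
ALL_DRIVERS_RATE = 1.00
SAFER_DRIVERS_RATE = 0.80  # hypothetical lower-crash comparison group

print(f"vs. all drivers:   {percent_reduction(AV_RATE, ALL_DRIVERS_RATE):.0f}% fewer")
print(f"vs. safer drivers: {percent_reduction(AV_RATE, SAFER_DRIVERS_RATE):.0f}% fewer")
```

With these placeholder inputs, the same AV crash rate looks like a smaller improvement when measured against a safer comparison group, which is the reason the choice of human benchmark matters.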

Over at Understanding AI, Timothy Lee and his team periodically provide a breakdown of serious Waymo safety incidents; you should read it yourself rather than just trusting the company’s data, but I find it reassuring about self-driving car safety. The serious crashes in which Waymo is involved are almost never Waymo’s fault.

You can hate Waymos without a good reason

I find myself increasingly wishing that Waymo haters would just say “they’re ugly bug cars and I don’t like technology.” That’s fair enough! Your preferences are legitimate, and while I don’t think they should get to control whether I ride in a Waymo, it’s reasonable for people to be nervous that they will end up, in practice, obliged to use a Waymo, especially once the technology is decisively much better than human drivers.

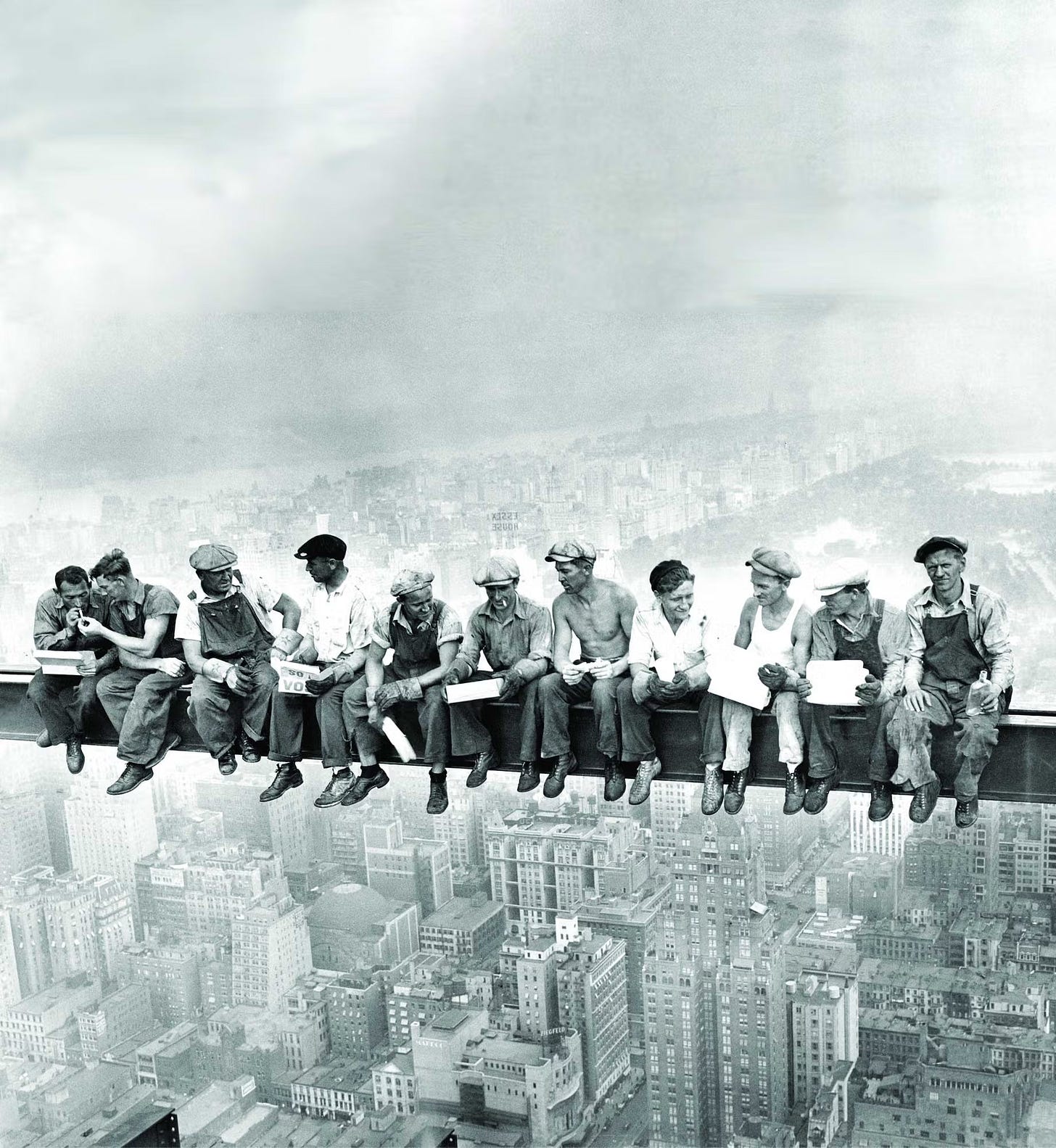

You don’t have to be in favor of all technological change, even lifesaving technological change. Some people pine for the “good old days” when construction workers sat on cranes without safety harnesses. While I don’t think we should return to routinely killing lots of construction workers out of romantic longing for the brave and simple past, I also don’t spend a lot of time going around yelling that this picture is not romantic and is actually quite problematic:

I also, separately, am very pro-gig work, which is a major stabilizing influence on the finances of many low-income Americans because it’s possible to do gig work and get paid same day. When you come up $100 short on rent or bills, none of your options are very good, and “go out and drive deliveries until you have the money” is a new addition to your options that I’m very glad we have. I worry that if those jobs dry up, nothing similarly good will replace them.

So I have sympathy for Gonzalez’s piece: She likes Uber drivers, she likes interacting with other people, and she thinks something important will be lost when we automate their jobs. She feels we’re doing this out of distaste for the messiness of sharing civilization with other people rather than in order to prevent accidents and free up other people’s labor for worthier pursuits.

I don’t feel that way about Waymos in particular, but there are jobs I feel that way about; I don’t, in fact, want to go full speed ahead on replacing all human labor, and I don’t expect a good future to result if we try.

But to make that case, you need to actually bite some bullets. Waymos appear to be much safer than human drivers, so if we ban Waymos and stick to human drivers, a lot of people will needlessly die. A lot of people will spend their time and energy driving others around — time and energy that is effectively wasted. Since it’s not needed to achieve the result of their labors, it is only being required of them as, effectively, a jobs program with very low wages.

We will, as a society, be poorer and die younger if we go down that route.

Maybe you think that’s worth it! I don’t think I have any grounds from which to tell someone who would prefer to live in a poorer, more dangerous, but more human society that their preference is objectively incorrect. Ultimately, looking ahead at the future of AI, I think we’re all going to be called to say precisely where we draw the line — and while I’m broadly pro-progress, there are plenty of trades ahead where I expect I’ll take more freedom over more safety and prosperity.

But if you wave the trade-off away by pretending that Waymos are also dangerous or that they are racist, then we can’t have a real debate. They’re not dangerous. They’re not racist. They’re just part of a world that’s changing much faster than a lot of people would prefer, and — yes — changing in a direction where we see one another less.

That trend bothers me too, though banning Waymos strikes me as a very costly and ineffective way to counter it. I would like a vision of what we’re building toward, not just what we’re preventing. Toward that end, I liked Caroline Sutton’s feminist case for Waymo, and I am glad more people are trying to articulate what they value and whether we’re building toward it or away from it.

But get the research right.


[1] That is, the average model misses light-skinned people 31.05% of the time and dark-skinned people 38.58% of the time, which is a 7.52 percentage point difference; it’s incorrect to refer to this as a 7.52 percent change, but the authors do so repeatedly.

[2] Tesla runs a cameras-only sensor suite, so if there were still racial disparities in image recognition, this would affect Teslas. I am much less impressed by Tesla’s safety record than Waymo’s and would venture no particular defense of them, but I doubt they have race-disparity problems because those don’t seem to exist with good modern machine learning systems.

[3] This estimate is based on Waymo’s last public update on total miles driven, in March, and its announced 4 million autonomous miles driven per week. The true total is likely higher, as Waymo has been steadily releasing more cars onto the roads.

[4] This is compared to the population of all human drivers, but Waymos are mostly displacing Uber and Lyft drivers, who have a lower rate of crashes on average, so this probably slightly overstates their safety impact to date. Waymos also have limited operation on highways, which also probably makes them look safer than miles-driven comparisons suggest.

Kudos for tracking down the original study. In my opinion, it should not be acceptable for a Union of Scientists to write "studies have shown ..." without any references to those publications. This is especially the case when journalists are expected to report on those claims.

Those same arguments against Waymos (or any AVs) also ignore the benefits that Waymos provide for women and people with disabilities.

As someone who doesn’t (can’t) drive, the best thing for me is more availability and cheaper access to all forms of transportation. AVs have long been a dream for many and whether or not a privately owned ride share company is the exact vision of the future, it is a clear step towards progress. Of course, yes, I want fast and reliable and affordable public transit. But I also want my own autonomy, one that doesn’t necessarily rely on as many humans to get me to where I want or need to go.

And of course, almost universally the women I know in San Francisco describe the feelings of safety and freedom in Waymos. Of course the vast majority of Uber drivers are fine. But we cannot deny that the chance of a very terrible encounter, albeit “small”, has a very meaningful impact on the freedom (or lack thereof) that women and other marginalized people experience with platforms like Uber. Waymos are a way to reclaim that freedom.

And I will further add as a pedestrian with low vision but one who still appreciates cars: six Waymos in a row on your street can be frustrating and just look silly. But at the end of the day, I trust and have experienced that they are more likely to stop for me than many of the human drivers in the city we share. 🤷 Forgive me for welcoming this change.