Why hasn't AI cured cancer?

One hundred years of progress, any day now

Americans are not thrilled about AI. With some tech — like Waymos — the more people see them, the more they like them. This is not true — or at least not uncomplicatedly true — of the AI chatbot and agent products from OpenAI, Anthropic, and Google.

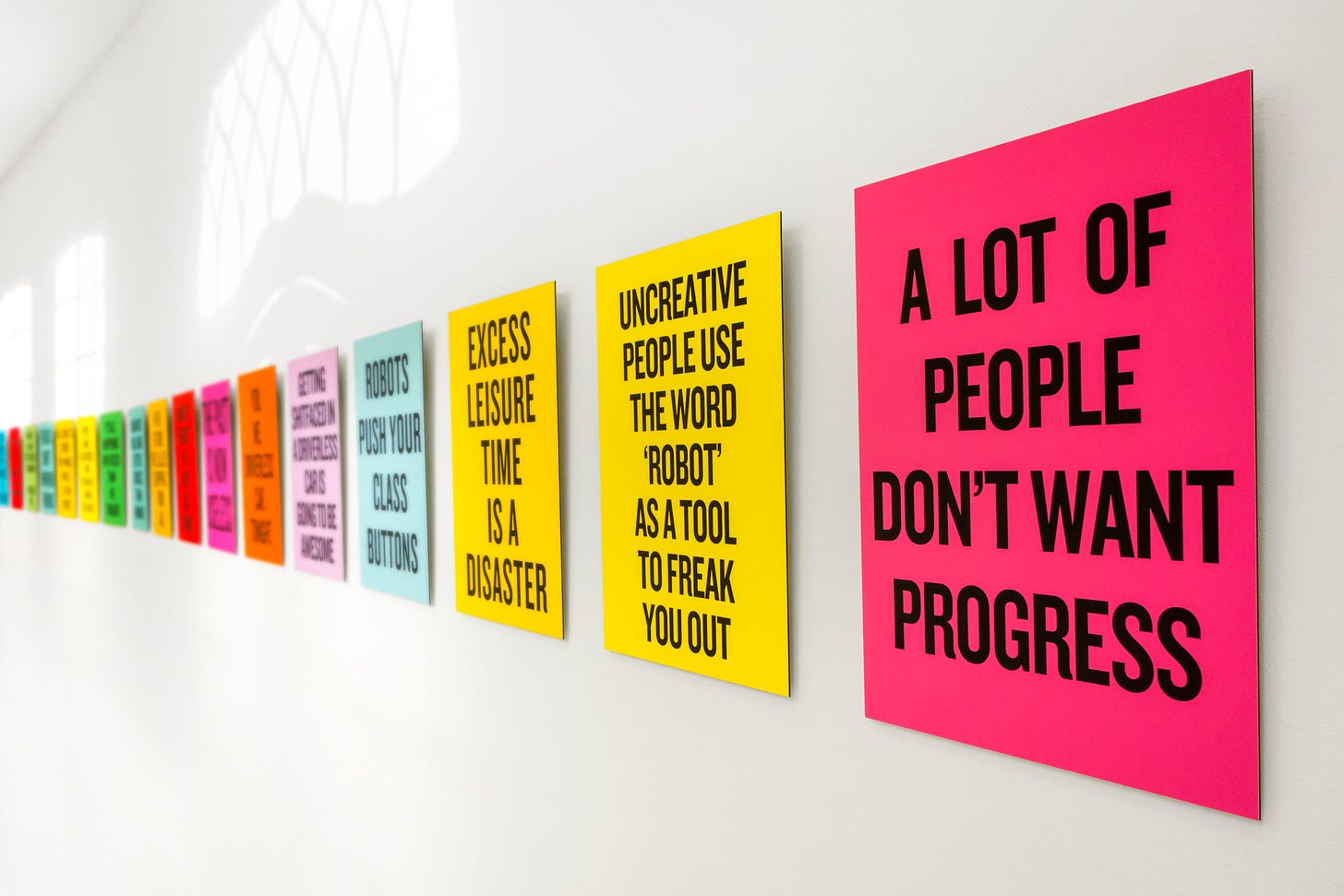

The companies building these products have not done themselves any favors by expressing a mix of pie-in-the-sky utopianism — will automating my job make me better off, Sam Altman? Are you sure? — and dire warnings that AIs might kill us all. Perhaps the most surprising thing is that AI isn’t even more unpopular.

“AI could displace half of all entry-level white collar jobs in the next 1–5 years,” Anthropic’s CEO warned.

“Jobs are definitely going to go away, full stop,” OpenAI’s CEO predicted.

“With the right big idea, you can generate huge amounts of value — but then, [technology] also has this wealth-concentrating effect. And if AGI is built, by default, it will be a hundred times, a thousand times, 10-thousand times more concentrating than we’ve seen so far,” OpenAI President Greg Brockman told me in 2019.

But these risks, the executives said, are justified by the potential benefits, in particular by the hopes of delivering scientific and medical advances to everyone.

When AI’s defenders have to explain to the public why the technology is a good thing, they tend to talk about science.

If we had thousands of superintelligent scientists who could give every rare disease the energy and attention that we give to common diseases, that would obviously be a good thing. If we could speed up the process of invention and discovery and bring the next hundred years of inventions to the table in the next 10 years, that would be hugely transformative.

But that raises the question: How powerful does AI need to be for it to start being helpful with scientific progress? If the whole point of AI is that we’re supposed to be curing cancer and Alzheimer’s and improving longevity, then where are all the receipts?

In some fields, the progress is obvious: AI tools genuinely make coding and data analysis tasks vastly easier and more convenient. I may not like the fact that everyone is using them for writing (I passionately hate it), but it is obviously the case that everyone is using them for writing, and so they are presumably getting some value out of doing so.

But we have, in the social media era, a warning of how AI’s proliferation could turn out: Facebook was meant to connect the world and appears to be quite bad for people. Technological progress has been an enormous force for good in the world, but that is no guarantee that any specific technology will be.

AI is scalable coding expertise and also scalable spam, universal translation and also universal slop. A lot of the predictions by those terrified of advanced AIs are coming true. So it’s fair to check: Are any of the predictions that it will speed up science coming true as well?

It started with a lie

The conversation about whether LLMs are currently speeding up scientific research began less than promisingly, with a viral and notorious fraud. Just over a year ago, a PhD student at MIT published a paper about the impact of an AI tool on scientific discovery.

The paper claimed that an R&D lab at a large U.S. firm had randomized the rollout of AI tools and found a huge effect: scientists with the AI tools discovered 44% more materials than scientists without them. AI, claimed the author, “automates 57% of idea-generation tasks.”
