Anthropic is somehow both too dangerous to allow and essential to national security

The White House finally found an AI regulation it likes: requiring AI companies to sell it killer robots

When Anthropic reached an agreement with the Department of Defense (DOD) to provide its AI model, Claude, for classified military work, the terms of service included two stipulations: Claude would not be used for mass surveillance of Americans on American soil and Claude would not be used to directly make kill decisions without a human involved in authorization.

Anthropic was already retraining Claude Gov to agree to tasks that it usually refuses to do, such as handling classified information, but killer autonomous robots and mass domestic surveillance were lines in the sand.

These might strike you as the most milquetoast rules ever. Some people would object to working on national defense at all, to participating in undeclared wars overseas, or to their tools being directly used to kill. Anthropic, though, very quickly moved into the defense space, becoming the first AI lab to have the infrastructure needed to securely process classified documents.

“Anthropic is committed to using frontier AI in support of US national security,” a spokesman told Semafor last week. “That’s why we were the first frontier AI company to put our models on classified networks and the first to provide customized models for national security customers. Claude is used for a wide variety of intelligence-related use cases across the government, including the DoW.”

Anthropic must now be bitterly regretting all its work to get approved to handle classified material, because it would be much better off if it had never undertaken it.

At DOD, Anthropic’s stipulations against lethal autonomous weapons and mass surveillance reportedly infuriated leadership, who felt strongly that it was inappropriate for any private partner to impose conditions on the department.

Fair enough! The DOD could have rejected the contract and still could choose to do so. But instead of sensibly responding “no deal,” some forces inside the DOD have chosen to pick a fight and to leak its messy details. This escalated the contract dispute into a fiasco that, multiple experts in AI and public policy told me, will probably end up being quite bad for the very national security concerns that supposedly motivated it.

For the last month, DOD has been leaking to Axios and Semafor that it’s increasingly furious with Anthropic about the restrictions and considering how to punish the company if it won’t back down. After a meeting Tuesday with Anthropic CEO Dario Amodei, Secretary of Defense Pete Hegseth reportedly issued an ultimatum: Either Anthropic agrees to design a Claude model that the U.S. military may use for any lawful purpose, or the DOD will take action.

The government laid out two potential paths: Either it would invoke the Defense Production Act (DPA) to try to force Anthropic to train a Claude that will obey military orders, or it would declare Anthropic a “supply chain risk,” forcing any other company that does business with the government to prove that it has cut Anthropic out of its workflows.

The department seems to be leaning toward the former threat: A Pentagon official told CNN that Hegseth “will ensure the Defense Production Act is invoked on Anthropic, compelling them to be used by the Pentagon regardless of if they want to or not.”

An incredibly stupid, counterproductive fight that everyone, including the DOD, will lose

Let me briefly enumerate all of the ways in which the government’s threats are nuts.

First, it’s patently ridiculous to claim both that Claude poses a national security threat and that it’s so necessary for wartime production that you have to nationalize the company. The fact that the DOD has yet to decide which claim to make suggests that, really, neither is true. It’s not clear if this use of the Defense Production Act would hold up in court. What I’m more confident of is that it wouldn’t get DOD what it wants.

Second, there’s the fact that Anthropic is only in this position because it tried to make its models available for national defense work in the first place. The obvious takeaway for every other AI company is that you should never agree to work with the U.S. military in any capacity, or it might immediately declare you too essential to abide by the contract terms it signed and try to nationalize you.

“When I speak with researchers at other frontier labs, their principles on this are similar, if not often stricter,” Dean Ball, the former Trump official who quite literally wrote the administration’s AI Action Plan, pointed out.

Leading researchers at both OpenAI and Google DeepMind have spoken out against the use of their models for surveillance. But OpenAI and Google have a convenient out if they don’t want to provide lethal autonomous weapons to DOD, allegedly because only Anthropic has already integrated into certain classified systems.

The companies can agree to lawful uses of their products on unclassified systems. But they can’t be assigned the high-stakes work that Anthropic took on because they simply haven’t set up the infrastructure to handle classified work at all. (And after these events, I somewhat doubt they’ll be in a hurry.)

“It’s really easy for every one of these other companies to talk about all lawful uses when they’re only on unclassified networks,” Mark Beall, president of government affairs at the AI Policy Network, told me. “These AIs are not reliable. All the other AI companies know it too. But because Anthropic was first onto the classified network, they’re the first people encountering these problems, and so they’re the first to get the buzzsaw.”

Third, there’s the fact that the administration has been vehemently, passionately opposed to even the most milquetoast of AI regulation on the grounds that government overreach could strangle the promising technology in the cradle.

Ball argued that the DOD demands “are the strictest regulations of AI being considered by any government on Earth, and it all comes from an administration that bills itself (and legitimately has been) deeply anti-AI-regulation.”

So the people who are worried about crushing America’s AI industry are broadly opposed. But so are the people who favor AI regulation: They generally want regulations to protect displaced workers, require labs to be transparent with the public, or prevent AI from killing us all, not a requirement that the AI be retrained to be ready to kill without oversight.

The threat to designate Anthropic as a supply chain risk is particularly absurd. The DOD could, in principle, try to insist that every contractor who works with the U.S. government demonstrate that they do not use Claude. It’s an extraordinary power that has basically only been used against suspected foreign spyware: for example, Russian antivirus software or various Chinese technologies.

China, a genuine geopolitical adversary of the United States, produces a number of AI models. Moonshot’s Kimi Claw, for instance, is an AI agent that operates natively in your browser and reports to servers in China. The government has taken some steps to disallow the use of Chinese models on government devices, and some vendors ban such models, but it hasn’t taken a step as sweeping as declaring Chinese AIs a supply chain risk.

Some of these models are even directly trained to imitate Claude. Apparently, there’s no need for a supply chain risk designation for these models, though. “Most invocations of the foreign enemy are really invocations of the domestic enemy, episode the latest.”

The threat is very clearly pretextual, an effort to punish Anthropic for not playing ball. But that threat would work a lot better if it were aimed at a company that actually wasn’t playing ball. Anthropic partnered with Palantir — a controversial data analytics company that made its fortune supplying surveillance and targeting software to the U.S. government — and is actively doing a lot of defense work.

The takeaway from this dispute will be that AI companies with any scruples whatsoever should avoid working with the defense industry.

“They’re setting themselves up for a strategic break between the DOD and the AI companies at a time when they need to be working closely,” Beall told me.

Anthropic’s leadership was already under some internal pressure over the deal with Palantir. Even if leadership wanted to back down here (and they have reiterated that they don’t), their employees are generally deeply invested in the company’s mission, which is at odds with training Killer Claude™. Many of the rank and file literally believe that the fate of the world depends on their not doing so.

At best, the Pentagon might just use the Defense Production Act to declare itself unbound by Claude’s terms of service, allowing it to declare victory without changing anything substantive; at likeliest, the whole thing is tied up in litigation for the next several years, limiting Anthropic’s ability to IPO as planned; at worst, DOD could probably wreck the company. But Anthropic has made it very clear it won’t budge, and I can’t see any plausible outcome that actually leaves Americans safer.[1]

Nobody wants this

It’s hard for me to think of a single constituency that would be happy about this fiasco, and indeed, DOD’s demands are deeply underwater with every single group polled, including Trump voters — only 28% of people who voted for Trump in the last two elections were in favor.

If you hate AI, you don’t want the Pentagon to seize control of it.

If you like AI, you probably don’t want the Pentagon to profoundly damage the industry and wreck investor confidence with a sudden nationalization.

If you want AI deeply enmeshed with the Pentagon, you’re probably worried this reduces the incentive for companies to work with DOD.

If you are against AI regulation, you probably don’t love the government abruptly deciding to threaten to choke an industry leader to death for no real reason.

If you’re in favor of AI regulation, you probably didn’t want the first major regulatory action to be: “You must build a killer robot.”

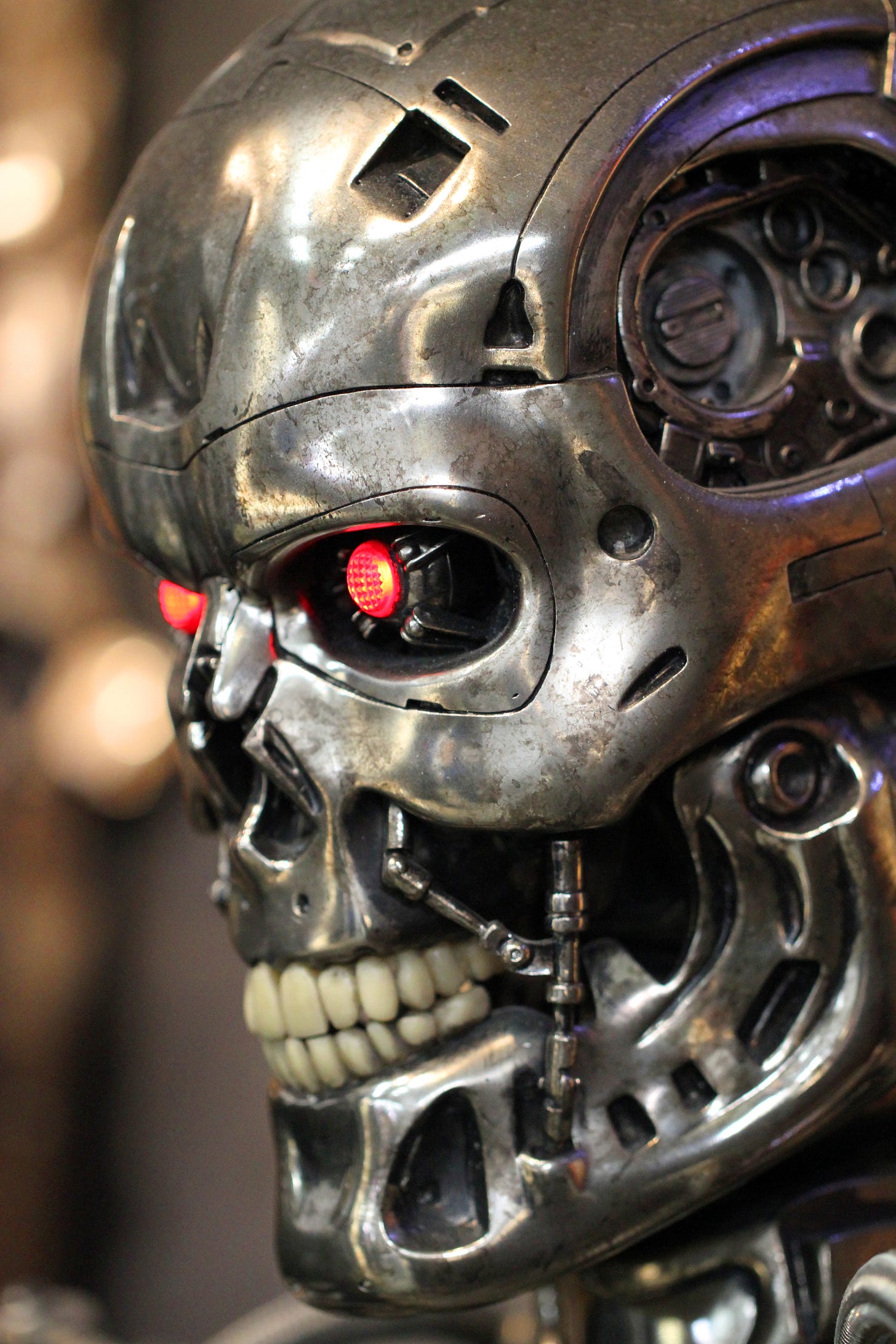

If you’re afraid of killer robots, you’re probably concerned that the government is trying to force a company to let them have Skynet.

Finally, the stupidest thing about all of this is that, as I read it, Anthropic is, in fact, willing to, eventually, build killer robots. In the defense space, people have been increasingly convincing themselves that we’re going to need killer robots at some point. Wars in the near future might be fought with autonomous drone swarms; those swarms might simply not have time to check in with humans between strikes. In certain circles, “we’re going to build killer robots pretty soon” has become an accepted fact.

(If this entire line of argument makes you increasingly worried that AI is going to turn on its creators and kill us all, well, welcome to the club.)

Anthropic has not ruled out building an LLM that could be used for lethal autonomous warfare. “Amodei’s public position,” Ball observed, “is essentially that autonomous lethal weapons controlled by frontier AI will be essential faster than most people realize, but that the models aren’t ready for this *today.*”

This is straightforwardly true. Claude is a best-in-class agentic model and a best-in-class writing and planning model, but in my experience, it is among the worst models from the major labs at visuospatial tasks.

Anthropic hasn’t trained an image model, and Claude is frequently handicapped in UI work by its extremely poor spatial visualization. You couldn’t use Claude to power a killer bot right now even if you wanted to; it would be a danger to our troops and fairly useless at our objectives.

Being generous to everybody involved, we’re fighting over this capability that Claude doesn’t even have because everyone involved feels that deeply held principles are at stake.

The Pentagon doesn’t want to accept a partnership in which a private company imposes terms and conditions it believes only the U.S. government has the right to set. Even if the terms and conditions are irrelevant and in no meaningful way limiting, it is intolerable for a company to constrain the government.

It’s a power play. “Anthropic could be saying ‘you can’t nuke the moon’ and we’d likely be in the same position,” one AI policy expert told me. It’s not that the specific terms are intolerable, it’s the very concept of the company having terms.

On its side, Anthropic is acutely aware that a powerful-enough AI could, in fact, end American constitutional government as we know it. (A government that does not have to maintain the consent of the governed, which can act with only the support of AIs, sounds like a government unmoored from most democratic accountability. Increasingly, the models will also be taking their own actions independently in the world, and there’s no plan to hold them accountable.)

Anthropic is made up of workers who were worried that OpenAI was not handling AI ethically enough, and making a “good” AI is its entire raison d’être. Of course it won’t compromise on a matter of principle just because it doesn’t yet make a huge difference in practice!

Let’s be a little less charitable for a moment.

What you have here is an AI company that has branded itself as the good guys — focused on bringing about superintelligence aligned with human interests. This company went out of its way to do national security work for the U.S. government and only belatedly learned what anyone could have told them a year ago, which is that you can’t pick and choose what work you do for the Pentagon.

Reportedly, there were a number of people at Anthropic who had reservations about the partnership with Palantir. I assume they are saying “I told you so” approximately every 30 seconds this week.

And for its own part, the DOD has worked itself up into a righteous fury that now endangers its access to a valuable new partnership and all future partnerships, all while generally making itself look like a bunch of thugs in the press.

The victims here are American innovation and investor confidence, along with everyone in the American public who wants an effective military but does not want it to spy on Americans or run on, not just killer robots, but hallucinating killer robots.

[1] Important exception here: if you think Anthropic was about to destroy the world, maybe all of this is great news? Maybe going around killing off America’s AI labs by trying to demand they retrain the AIs to be more murderous and failing to get them to do it is the only way to stop the AI race? I feel obliged to acknowledge this line of argument, since I take seriously the case that Anthropic and its competitors are bad for the world. But I think a much likelier consequence of this behavior by the government is for AI labs to be much more reluctant to engage with the government, much less inclined to build partnerships, and much more inclined to work in secrecy. So I bet this is bad news even if you think Anthropic is itself bad news.

"only belatedly learned what anyone could have told them a year ago, which is that you can’t pick and choose what work you do for the Pentagon"

This is a good line, but it's clearly not true? Millions of people do work for DoD in some form or another and no-one thinks that agreeing to sell toilets to military bases renders you liable to being conscripted into making bombs. Maybe Anthropic should've been more alert to the possibility of a Trumpified DoD pulling some shit, but the norm breaking (and, I think, law breaking) is all on one side.

It's unclear to me if David Sacks objects to this outcome: he's been vocal about his hostility towards Anthropic in the recent past, and has so far avoided any comment talking about this (he's spent much more of his Twitter time recently denying that Trump and Musk had any involvement in Epstein's crimes, for unclear reasons).

It's unclear to me if Stephen Miller objects to this outcome: certainly Katie Miller has been very vocal about opposing "woke AI companies", a standard which for her seems to include OpenAI, and Stephen Miller's immigration policies have done a tremendous amount to damage every AI lab.

Elon Musk probably benefits from the fight, even if it's a bit embarrassing to have Grok available and everyone involved dismissing it as inferior to Claude.

I think everyone who wants America to have a strong AI industry overall thinks this is bad. But that doesn't obviously describe multiple important figures in the Trump administration.